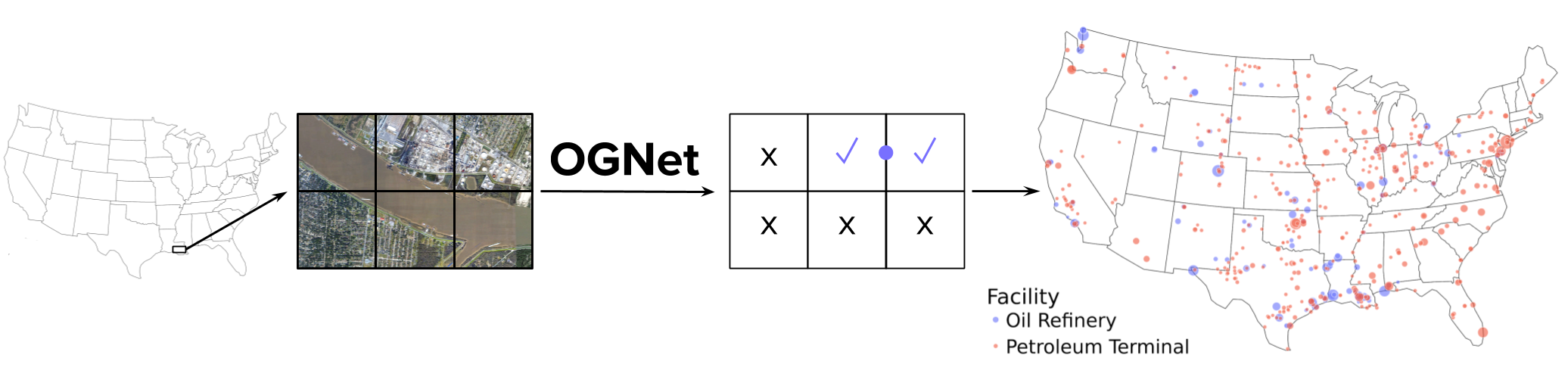

OGNet detected several oil and gas facilities not present in four publicly available datasets.

We compared the manually verified facilities detected by OGNet against a combined dataset built from four publicly available datasets: GOGI, GHGRP, HIFLD, and EIA. We removed duplicate records by merging coordinates within 2 km of each other.
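The deduplication step can be illustrated with a minimal sketch. The exact merging procedure is not described here, so the greedy strategy below (keep a record only if no already-kept record lies within the radius) is an assumption, as are the names `haversine_km` and `merge_nearby`. Only the 2 km threshold comes from the text.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(a, b):
    """Great-circle distance in km between two (lat, lon) points in degrees."""
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    dlat, dlon = lat2 - lat1, lon2 - lon1
    h = math.sin(dlat / 2) ** 2 + math.cos(lat1) * math.cos(lat2) * math.sin(dlon / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def merge_nearby(records, radius_km=2.0):
    """Greedy deduplication (assumed strategy): keep the first record of each
    group of points that fall within radius_km of an already-kept record."""
    kept = []
    for point in records:
        if all(haversine_km(point, other) > radius_km for other in kept):
            kept.append(point)
    return kept


# Hypothetical coordinates: the first two are well under 2 km apart.
combined = [(29.700, -95.200), (29.705, -95.200), (30.000, -95.200)]
deduplicated = merge_nearby(combined)
```

A greedy pass depends on record order and runs in quadratic time, which is acceptable for a few thousand facilities; a spatial index would be the natural replacement for larger inputs.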

We counted the reported facilities that OGNet detected and found that it detected 73.5% of the oil refineries and 23.9% of the petroleum terminals in the combined dataset. Close to half of the "missed" oil refineries were missed because of inaccurate locations reported in the public datasets.

We counted the detected facilities that occur in neither the combined public dataset nor the training set, and found that OGNet detected 6 new oil refineries (including one abandoned facility) and 142 new petroleum terminals.
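The two counts described above (reported facilities that were detected, and detections absent from known locations) can be sketched as follows. This is an illustration under stated assumptions, not the procedure used to produce the reported figures: the matching criterion and the `match_km` radius are hypothetical, as are the function names and sample coordinates.

```python
import math


def haversine_km(a, b):
    """Great-circle distance in km between two (lat, lon) points in degrees."""
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    dlat, dlon = lat2 - lat1, lon2 - lon1
    h = math.sin(dlat / 2) ** 2 + math.cos(lat1) * math.cos(lat2) * math.sin(dlon / 2) ** 2
    return 2 * 6371.0 * math.asin(math.sqrt(h))


def has_match(point, candidates, match_km):
    """True if any candidate lies within match_km of point."""
    return any(haversine_km(point, c) <= match_km for c in candidates)


def detected_fraction(reported, detections, match_km=2.0):
    """Share of reported facilities with a nearby detection.
    match_km is an assumed matching radius, not a value from the text."""
    if not reported:
        return 0.0
    hits = sum(has_match(r, detections, match_km) for r in reported)
    return hits / len(reported)


def new_detections(detections, known, match_km=2.0):
    """Detections with no nearby known facility; `known` would hold the
    combined public dataset plus the training-set locations."""
    return [d for d in detections if not has_match(d, known, match_km)]


# Hypothetical coordinates for illustration only.
reported = [(29.700, -95.200), (31.000, -97.000)]
detections = [(29.701, -95.201), (35.000, -100.000)]
fraction = detected_fraction(reported, detections)
unlisted = new_detections(detections, reported)
```

Because a reported location can be wrong by more than the matching radius, a facility may be counted as missed even though it was detected, which is consistent with the note about inaccurate locations in the public datasets.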

Download OGNet Detections (v1.0)

Each detection is associated with a latitude and longitude, facility type, storage tank count, and whether the detection corresponds to multiple adjacent facilities.

Disclaimer: This is the first version of the database produced by OGNet and has limitations which should be considered carefully.

- NAIP imagery does not always provide a very recent capture of a location (see NAIP Status Maps). Results could differ with other imagery sources.

- The locations of detections are restricted to the coverage of NAIP, which only captures the continental U.S.

- The locations are not always centered on the facility.

- The storage tank counts are not always exact.